The Quality Data Management Imperative

(The following blog post is the third in a series on the need for the investment management industry to embrace sound data management practices. You can access the first and second posts here.)

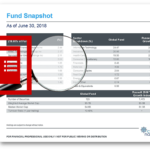

Earlier today I was on a conference call with one of the largest asset management firms in the world. The call was with marketing people representing operational groups in London, Hong Kong, Vancouver, and New York. The purpose of the call was to discuss factsheet automation and the need to unify their operations. Interestingly, we could hardly stay on the topic of documents and automation tools, because their real problem was source data.

During the call, each group described its internal challenges in collecting clean, verifiably accurate, and finalized data for reporting.

As a provider of automation solutions for marketing communication needs in this industry, we are on the receiving end of the data supply chain for a large number of investment management companies. In fact, it's a core part of our stock in trade: the ability to collect source data in whatever format it's available, turn it into a clean reporting data warehouse, and then use it flexibly to drive a wide variety of communication efforts.

What is the Status Quo?

When I started in this industry, I learned I had an unrealistic expectation when it came to asset management data. I assumed asset management firms had a clean data warehouse to feed marketing and reporting processes. Instead, data lived in a patchwork of spreadsheets created on people's desktops and, frequently, in obtuse, overlapping dumps from various internal and external systems.

That’s just the way it was and, in fact, it’s still the state of the art at many firms today.

Things have improved somewhat in recent years, however. These days, the most common answer from new clients is that they do have a data warehouse, but it's incomplete: we can expect to get perhaps 65% of the data we need for marketing and reporting purposes from that system. The rest will come by way of (you guessed it) Excel spreadsheets.

Regardless of the storage and processing techniques (databases, warehouses, Excel), the most shocking thing we find missing from the asset management industry's data processes is not a type of data or a piece of technology; it's a comprehensive quality data management initiative.

Related: Interview with Data Quality Expert, Maria C. Villar. What is Data Quality and Why is it Important for Marketers?

Data Governance

While terms like "data quality", "data management", and "data governance" seem self-defining and even interchangeable, in most companies they are treated as buzzwords to hang on just about any database project. In fact, each refers to a well-refined, formal set of tools, standards, and practices that enterprises can adopt if they truly want to reduce their data-related business risk.

For example, data governance refers to the overall management of the availability, usability, integrity, and security of the data employed in an enterprise. A sound data governance program includes a governing body or council, a defined set of procedures, and a plan to execute those procedures.

If you’re part of the investment marketing world, this term is probably completely new to you.

Marketers as Data Aggregators

The company I was speaking with on that conference call was no different from most in the industry. The operating expectation is that the marketing operations group will collect data from multiple systems and vendors, consolidate and normalize it as necessary, somehow verify its accuracy, and then move those numbers into documents.

As with most firms, their concept of enterprise data quality and data governance is practically non-existent. Oh, I'm sure that deep in the enterprise IT and risk management groups they use these terms to justify various expenditures, but on the street where the marketing department lives, data arrives in haphazard forms and formats. This leaves the onus on the marketing team to figure out which information is authoritative and how to QA it.

The Trickle-Down Effect of Bad Data

In the last two decades, we've encountered very few internal IT organizations that provide truly quality-controlled data, backed by real data ownership and stewardship, to support vital marketing and sales communication.

They support quality data for statements (holdings, trades, shares, and values), but support for other marketing and reporting functions is haphazard at best. Once the trades are closed and the accounts balanced, it can be extremely hard to find someone who will take actual ownership of the extended reporting data sets: holdings, composition, categorizations, statistics, expenses, and the myriad ways the data has to be sliced, diced, and reported.

One fund company recently told me that its internal data warehousing team would stop providing bespoke reports to the marketing group as a service. The marketing group would then have to go to a data warehouse web interface and create its own reports. Sure, this kind of self-serve reporting is technically feasible, but it completely lacks auditable controls and ownership. Or, as the marketing director put it, "We have no idea what data we're pulling or if it's final, complete, or will be the same if we pull it again tomorrow!"

This problem is magnified for any firm selling investment products more complicated than basic funds and ETFs. Strategy products that blend investments from many different sources and of many different types tend to expose the industry's biggest challenges in data aggregation, quality control, and reporting.

Related: West-Coast TurnKey Asset Management Provider Conquers Complex Data Scenario

The Formula for Quality Data Management

So, how do you create good quality data management practices within an asset management firm in support of marketing operations?

There are really four principal factors:

- Ownership – someone has to own every reportable data point and understand its origins, purpose, and validity. If something changes, that owner needs to communicate the change to the firm's data management, compliance, and communications teams.

- Controls – a system must be put in place to make the data auditable. Where did it come from? How was it then calculated, transformed, or combined? How do you know the process was both accurate and consistent?

- Availability – where the officially sanctioned data lives, and how to access it, is often a secret. People don't know where it is, how to get it, or what services the data group can provide…or when. So they work around the problem by going back to spreadsheets that circumvent the process.

- Active Warehouse Maintenance – this is a very common trap. IT groups building corporate data resources like to fire and forget. They build the database and the import/export utilities. Then the team "rolls off" onto other projects, as if the data sources and their uses never change…which, of course, they do.
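To make the ownership and controls factors a little more concrete, here is a minimal Python sketch of what tracking a single reportable data point might look like. Everything in it is hypothetical and for illustration only: the `ReportableDataPoint` class, its `apply` and `fingerprint` methods, the `to_percent` step, and the sample values, owner, and source names are assumptions, not a description of any particular product or of our own platform. The idea is that each value carries a named owner, its source, and a log of every transformation applied to it, plus a fingerprint that changes whenever the value, source, or processing steps change. That fingerprint speaks directly to the marketing director's worry about whether the data "will be the same if we pull it again tomorrow."

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class ReportableDataPoint:
    """Hypothetical record tying one reportable value to an owner and its lineage."""

    name: str
    value: float
    owner: str   # Ownership: the person accountable for this data point
    source: str  # Controls: the system or file the raw value came from
    lineage: list = field(default_factory=list)  # Controls: ordered log of transformations

    def apply(self, step: str, func: Callable[[float], float]) -> "ReportableDataPoint":
        """Transform the value and append a before/after entry to the lineage log."""
        before = self.value
        self.value = func(before)
        self.lineage.append({
            "step": step,
            "before": before,
            "after": self.value,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return self

    def fingerprint(self) -> str:
        """SHA-256 over name, value, source, and step names (timestamps excluded),
        so the same inputs and steps always produce the same fingerprint."""
        payload = json.dumps(
            {
                "name": self.name,
                "value": self.value,
                "source": self.source,
                "steps": [entry["step"] for entry in self.lineage],
            },
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def to_percent(v: float) -> float:
    """Hypothetical example transformation: express a ratio as a percentage."""
    return round(v * 100, 4)


# Illustrative values only: the same pull made on two days, plus a revised pull.
today = ReportableDataPoint("fund_a_expense_ratio", 0.0075, "jane.doe", "warehouse.expenses")
today.apply("to_percent", to_percent)

tomorrow = ReportableDataPoint("fund_a_expense_ratio", 0.0075, "jane.doe", "warehouse.expenses")
tomorrow.apply("to_percent", to_percent)

revised = ReportableDataPoint("fund_a_expense_ratio", 0.0080, "jane.doe", "warehouse.expenses")
revised.apply("to_percent", to_percent)

print(today.fingerprint() == tomorrow.fingerprint())  # True: same inputs, same steps
print(today.fingerprint() == revised.fingerprint())   # False: the value changed
```

Timestamps are deliberately left out of the fingerprint so that two pulls match whenever the data and the processing match, regardless of when they ran. In a real firm this role would more likely be filled by the warehouse's own audit tables or a data catalog than by application code; the sketch only shows the kind of information an owner and a control process need to capture.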

During the data onboarding stage of our projects, I'm continually shocked by how frequently some version of the following happens:

There's a data mismatch of some sort. After re-checking our loaders and data maps and digging around the source data files, the client finally says, "Oh, you'll need this additional lookup table." Or, "Oh, you can't use that data from the data mart; here's a spreadsheet that's more up to date."

This illustrates a failure in all four of the principal factors listed above. There was clearly no ownership of the data point, no maintenance of the data mart, and no controls, so nothing ensured that clean, accurate information was made available to the ultimate user of the data.

It’s not the crime, it’s the cover-up

Forgive this overly dramatic heading. I'm not trying to fear-monger. But if you publish investment data, your liability for publishing incorrect data is minor compared to your liability for having irresponsible data management practices.

Fortunately, there are vendors, tools, and educational resources that companies can use to jump-start a data quality management strategy and the facilities to support it. If you're interested in changing the status quo at your firm, just drop me a line or connect with me on LinkedIn. I'm always happy to help. – John


Here are some related resources that might interest you:

- Compare the Top 3 Finserv Content Automation Vendors [White paper]

- Create Pitchbooks that Drive Sales [White paper]

- Build vs. Buy: Should Your Financial Services Firm Outsource or Insource Marketing Technology? [White paper]

- 10 Tips for Rebranding your Fund Marketing Documents [White paper]